📝 Deep Dive: Stop Making AI Work Like Humans. Make AI Work Like AI.

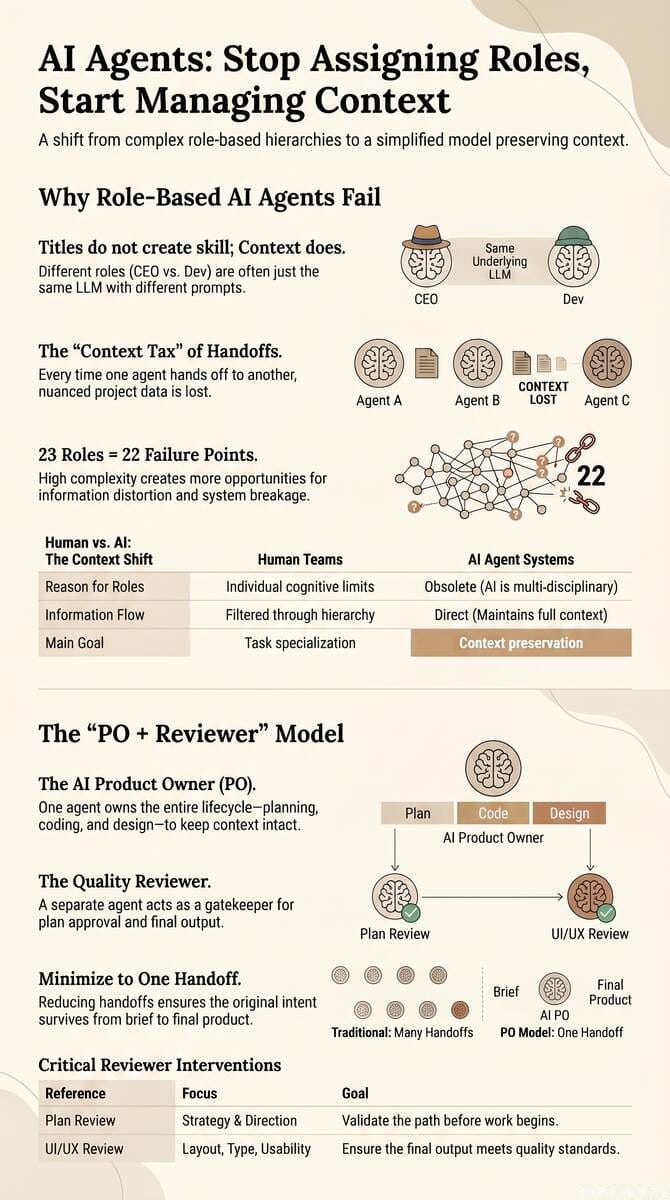

TL;DR: Many multi-agent AI setups underperform because they copy human org charts into agent workflows. Role-based handoffs cause context decay, slower execution, and higher coordination overhead. This post proposes an AI-native alternative: a lean PO (Product Owner) + Reviewer model designed to preserve context and improve speed, quality, and reliability.

Introduction: The "Org Chart" Trap

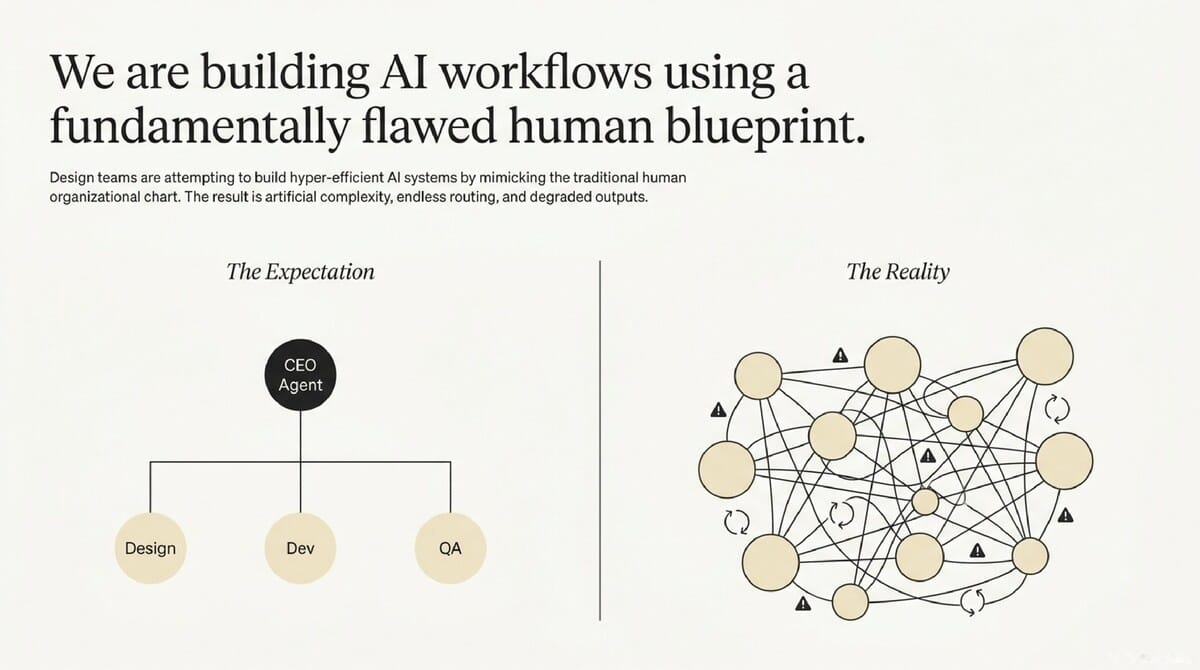

Design leaders and product managers are increasingly spending weeks architecting complex "agentic" workflows within their organizations. These leaders are building digital mirror images of their own departments, assigning specific titles to different AI instances and expecting a collaborative powerhouse. However, the reality is often a series of shallow, disconnected outputs that require more "babysitting" from human leads than the manual work they were supposed to replace.

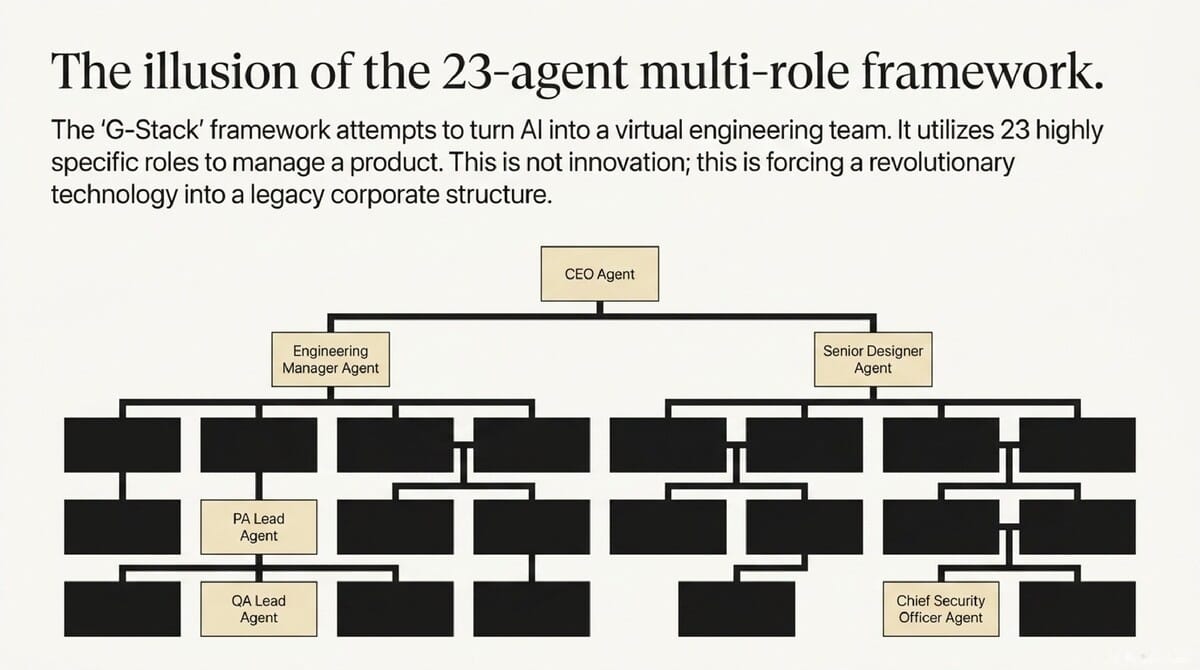

The excitement surrounding frameworks like "G-Stack" is understandable from a scaling perspective. The promise of an instant, virtual engineering team—complete with a CEO, a Senior Designer, and a QA Lead—sounds like the ultimate solution for high-velocity product development. Yet, the frustration sets in when the "CEO Agent" fails to act with the strategic depth of a human executive and the "Designer Agent" ignores the technical constraints defined by the "Engineer Agent."

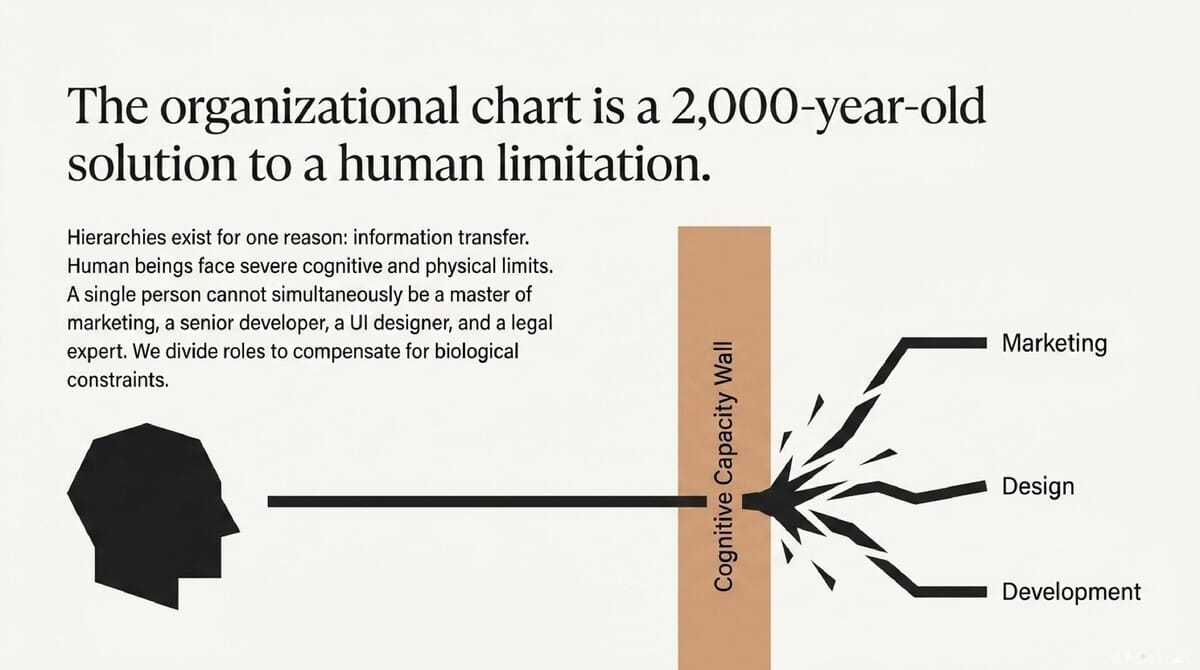

The fundamental issue is that we are forcing AI to solve 2,000-year-old human problems. We are applying organizational structures designed specifically to mitigate human information transfer issues to a technology that does not share our biological constraints. This "Org Chart" trap creates a massive "handoff tax" that slows down Design Ops and degrades the quality of the final product.

Takeaway #1: Stop Applying Human Cognitive Limits to AI

Why your team structure is a 2,000-year-old solution to a problem AI doesn't have.

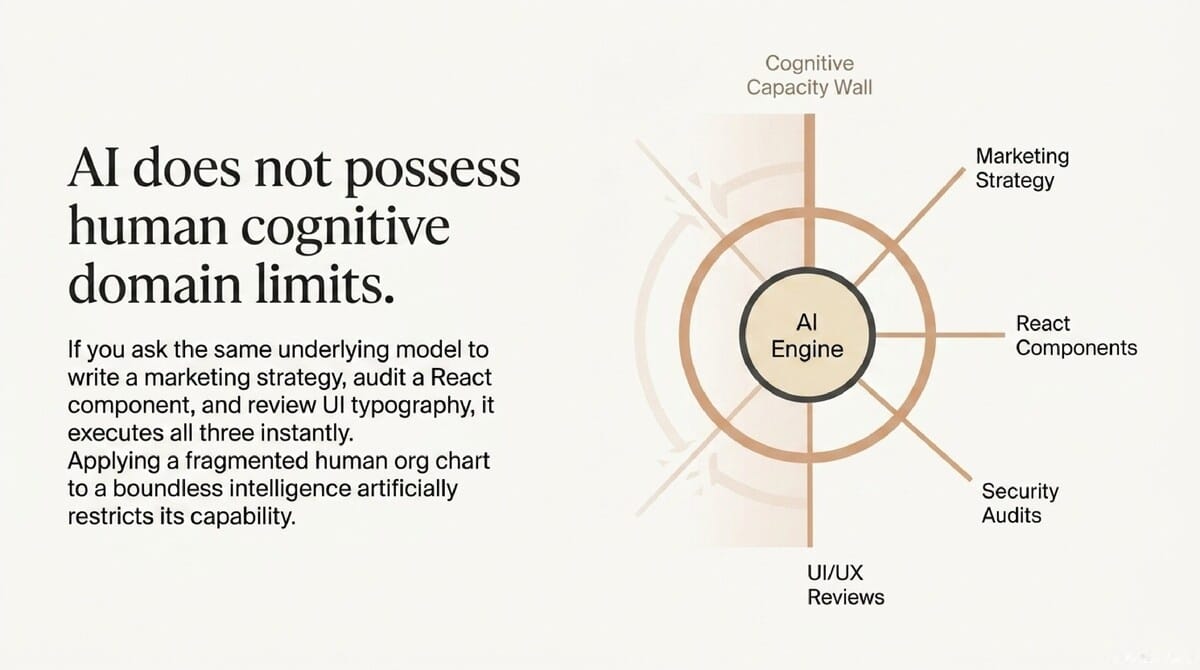

Human hierarchies were not born out of a desire for bureaucracy; they were created as a functional workaround for human cognitive limits. Historically, as organizations grew, it became physically impossible for one person to process all the information required to run a company. No single human can maintain deep expertise in marketing, React component architecture, accessibility compliance, and financial law simultaneously.

For a Design Lead, trying to "compartmentalize" an AI into a specific role actually nerfs its greatest strength: the ability to synthesize across domains instantly. Human specialization is a necessity driven by limited bandwidth, but AI is a natural generalist. When you force a model into a "Designer" role, you are effectively putting blinders on a system that is naturally built for high-level overview and detail-oriented execution.

Human Cognitive Limits vs. AI Generalist Reality

- Human Specialist: Possesses deep expertise in one area but has limited bandwidth for other domains. Transferring knowledge between specialists requires time and inevitably results in "lossy" data transmission.

- AI Generalist: Draws on broad knowledge across many domains from its training. It can switch between drafting a React component, auditing a security flaw, and suggesting a UI color palette in a single session, keeping the detail and nuance of earlier work as long as that work remains in its context.

Leadership Impact: Managing the Design System

In Design Ops, this realization is transformative for managing complex assets like token libraries. A human specialist might struggle to see how a small change in a color token affects the accessibility of a specific UI component and the performance of the underlying CSS. An AI generalist, however, can analyze the design token, the component code, and the accessibility documentation as a single, unified data set.

By forcing AI into roles, you are treating its vast knowledge base as a liability rather than an asset. You are effectively paying the cost of specialization—increased handoffs and fragmented context—without gaining any of the benefits. True AI strategy involves removing these artificial silos to allow the model to act as a cross-functional executor.

Takeaway #2: Titles Don't Create Capability—Context Does

The tech industry often gets distracted by high-profile endorsements of complex, multi-role agent structures. For example, the G-Stack framework has gained significant attention for its approach to virtualizing an entire C-suite and engineering department.

"G-Stack makes AI into a virtual engineering team... a CEO agent to rethink the product, an Engineering Manager to lock the architecture, and a Senior Designer to grade the design." (Garry Tan, President and CEO of Y Combinator)

The "CEO" prompt is just Claude in a suit.

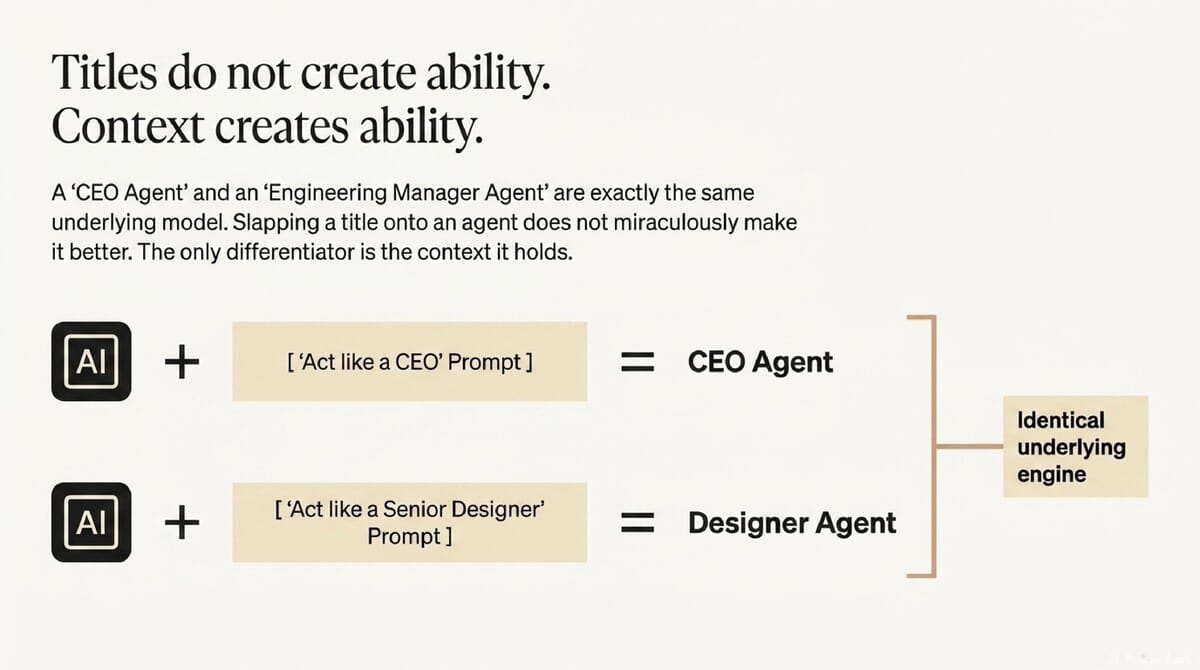

A critical realization for leadership is that giving an agent a title like "Staff Engineer" or "CEO" does not change the underlying model's intelligence. Whether the agent is labeled a "Designer" or a "Manager," it is often the same model—such as Claude 3.5 Sonnet or GPT-4o—running on slightly different instructions. The title is merely a prompt, a cosmetic layer that tells the AI to prioritize certain words over others.

The actual lever for quality is not the "Role" assigned, but the "Context" the AI is provided. When you split a single task into a "CEO Agent" and a "Designer Agent," you aren't getting two different brains working together. You are getting the same brain with two different sets of restricted, siloed instructions.
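The point about titles being a prompt-level detail can be made concrete with a small sketch. Everything here is hypothetical: `MODEL`, `AgentRequest`, and `make_agent` are illustrative names, not part of G-Stack or any real SDK, and no model is actually called. The sketch only shows which fields change when an agent is given a different title.

```python
from dataclasses import dataclass

# Hypothetical model identifier; substitute whatever model your stack uses.
MODEL = "example-model"


@dataclass(frozen=True)
class AgentRequest:
    model: str
    system_prompt: str
    context: tuple[str, ...]  # documents the agent is allowed to see


def make_agent(title: str, context: list[str]) -> AgentRequest:
    """Build a request for a 'titled' agent; only the prompt text and context vary."""
    return AgentRequest(
        model=MODEL,
        system_prompt=f"Think like a {title}.",
        context=tuple(context),
    )


project_history = ["product requirements", "user research", "design trade-offs"]

ceo = make_agent("CEO", context=["summary from previous agent"])
designer = make_agent("Senior Designer", context=["summary from previous agent"])
generalist = make_agent("Product Owner", context=project_history)

# Both titled agents call the same model; the title changes only the prompt string.
print(ceo.model == designer.model)
# The generalist differs where it matters: in how much of the project it can see.
print(len(generalist.context), "documents vs", len(ceo.context))
```

In this framing, improving an agent means widening `context`, not rewording `system_prompt`.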

Leadership Impact: The Strategic Cost of Role-Playing

Design leaders must understand that "Context" is the raw material of intelligence. Assigning a "Senior Designer" title to an agent doesn't magically imbue it with 10 years of UX experience if it can't see the previous product requirements or user research. If the agent doesn't have access to the full project history, it will hallucinate or provide generic, shallow feedback regardless of its title.

The goal of a robust AI strategy is "Context-Aware Synthesis," not "Role-Based Segregation." Instead of wasting time refining the "personality" of a CEO agent, leaders should focus on how to feed the AI more relevant, high-fidelity data. Capability in AI comes from the depth of the information provided, not the prestige of the label on the prompt.

Takeaway #3: The "Handoff Tax" and Context Decay

Every handoff is a game of 'Telephone' that kills your product vision.

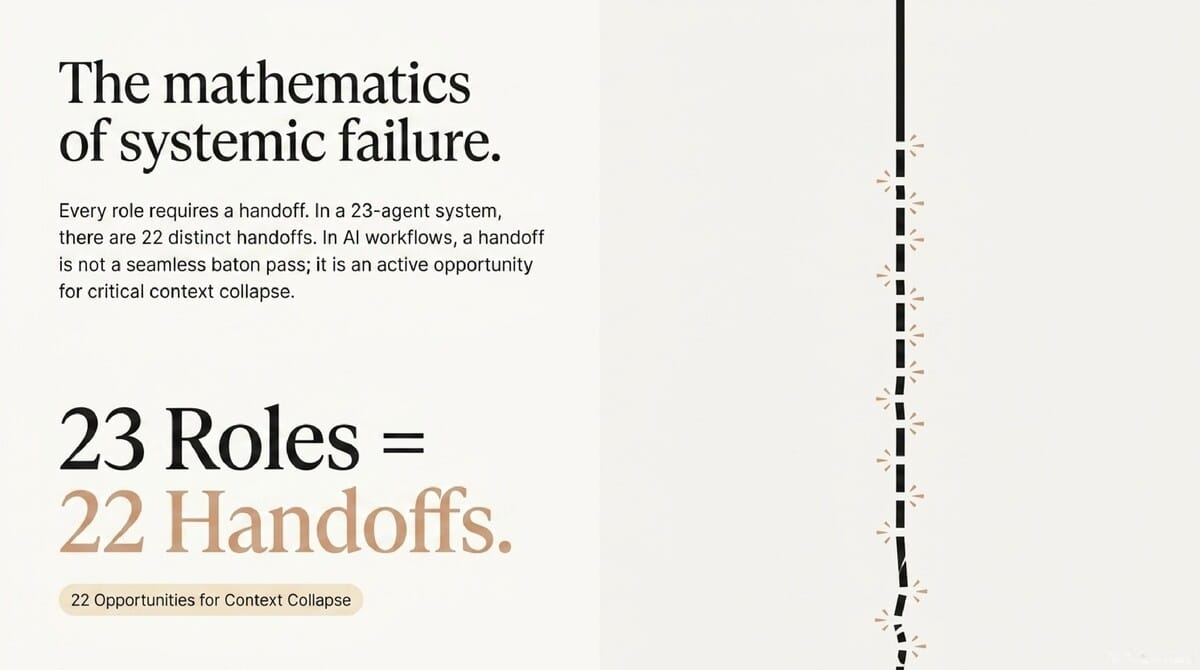

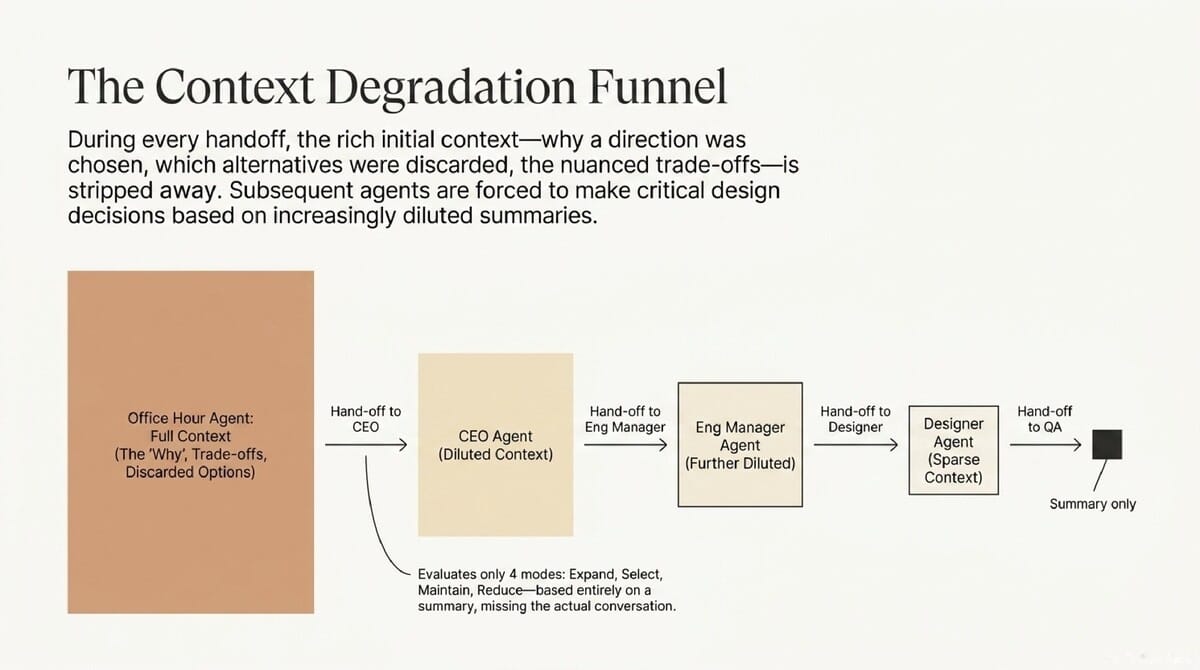

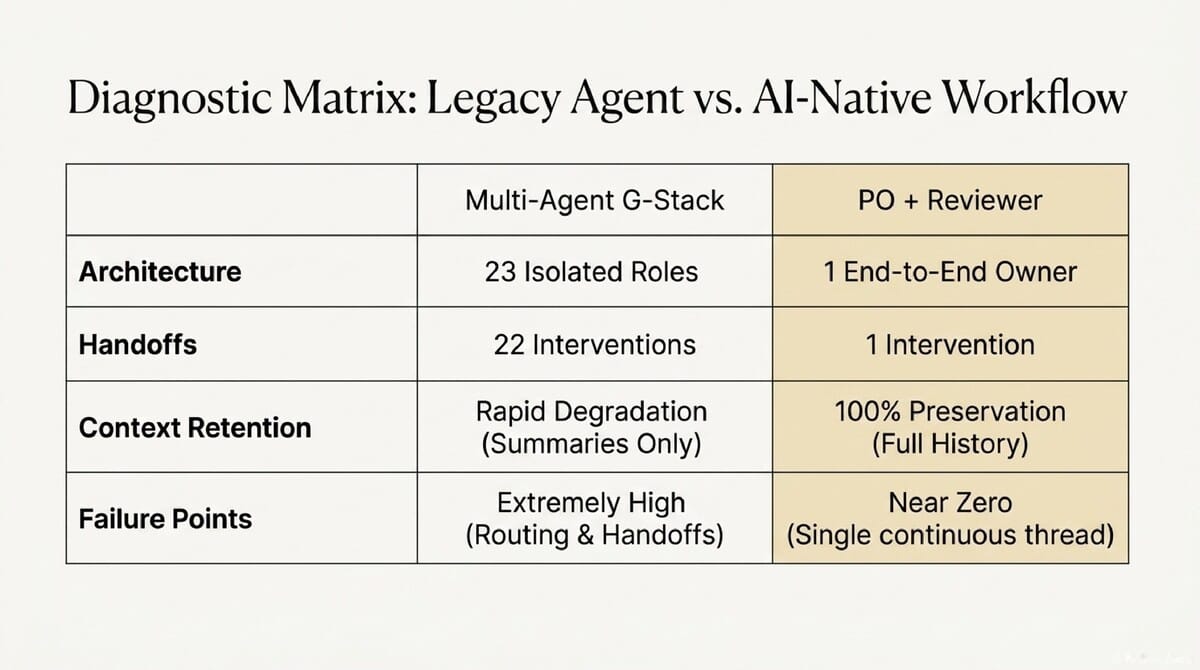

In a structure like G-Stack, which utilizes 23 distinct roles, the workflow is defined by a chain of handoffs. Every time one agent "hands off" a task to another, information is inevitably compressed into a summary. This compression is where the "Why"—the logic, the trade-offs, and the specific design intent—goes to die.

For Design Ops, this "Handoff Tax" is devastating to product quality. Imagine an "Office Hours Agent" spends a session discussing why a specific button needs a 40px hit area to meet mobile accessibility requirements. If that agent passes only a summary to the "CEO Agent," the reason for the 40px requirement is lost; the CEO only sees "Button update needed."

Operational Scenario: The Breakdown of Design Intent

In a 23-role structure, the CEO Agent might then decide to "shrink the UI" to fit more content on the screen to satisfy a business goal. Because the CEO Agent never saw the original trade-off discussion about accessibility, it blindly overrides a critical UX decision. This leads to broken components and a fragmented design system that requires human intervention to fix.

Summaries lead to "judging" rather than "building." When agents only see filtered summaries, they lose the ability to understand the nuance of the project's evolution. This results in decisions that feel shallow, disconnected, or outright contradictory to the project's core goals, creating significant technical and design debt.
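The button scenario can be reduced to a toy model. This is a minimal sketch under stated assumptions: `Decision`, `summarize`, and `decide` are hypothetical names, and the keyword check stands in for whatever reasoning a real agent would do. What it illustrates is that the same decision logic reaches a different conclusion once the rationale has been summarized away.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    what: str
    why: str  # the rationale that a summary tends to drop


def summarize(decision: Decision) -> str:
    """Model a lossy handoff: only the 'what' survives, the 'why' is discarded."""
    return decision.what


def decide(visible_text: str) -> str:
    """Same logic for every agent; the outcome depends only on what it can see."""
    if "accessibility" in visible_text:
        return "fit more content without reducing the button hit area"
    return "shrink the UI to fit more content"


original = Decision(
    what="Button update needed",
    why="40px hit area required for mobile accessibility",
)

# Downstream agent after a handoff: sees only the summary.
after_handoff = decide(summarize(original))
# Single agent with no handoff: still sees the rationale.
with_full_context = decide(f"{original.what}: {original.why}")

print(after_handoff)
print(with_full_context)
```

Nothing about the downstream agent is "less capable" here; it simply never received the constraint.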

Takeaway #4: The Lean Alternative—The PO + Reviewer Model

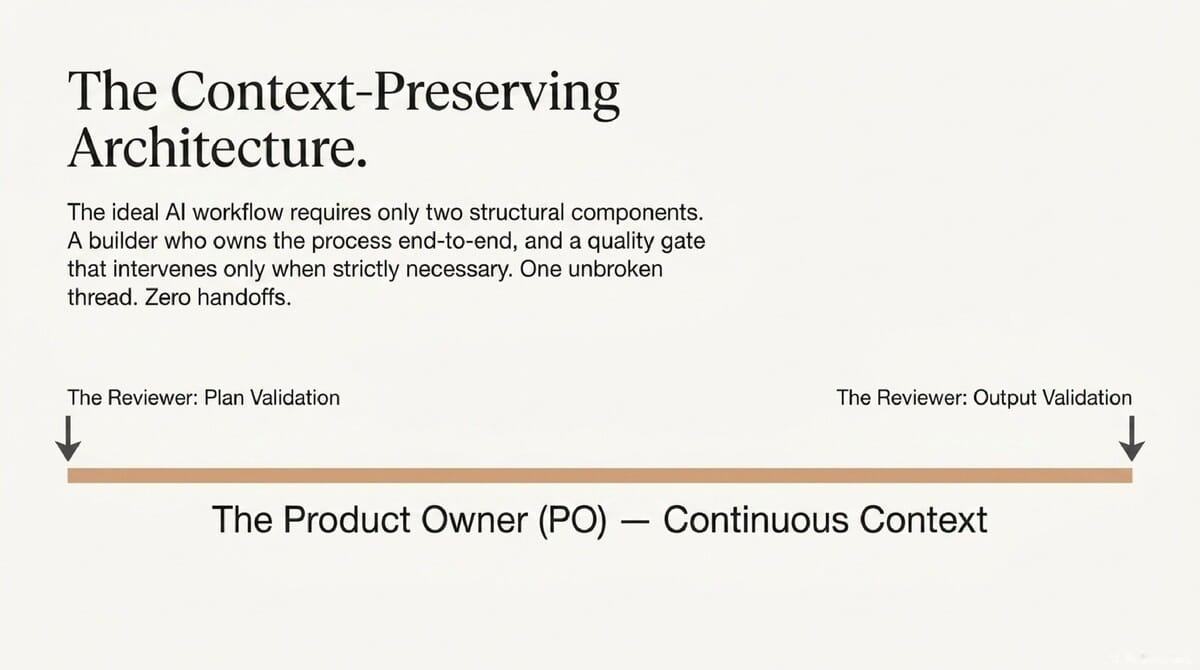

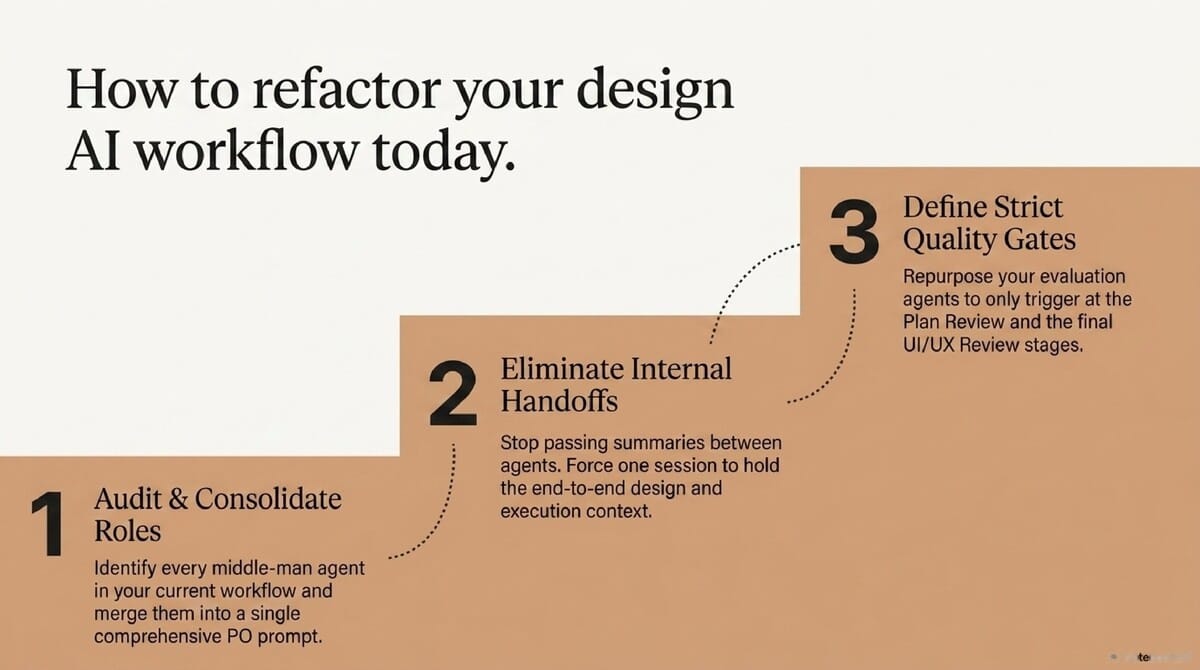

To avoid the pitfalls of "Agentic Bureaucracy," leadership must move toward a structure that is native to how AI actually functions. This means simplifying the virtual org chart to maximize context retention and minimize failure points.

The Two-Tier System: From 23 Agents to 2.

Instead of a 23-role team that mimics a bloated corporation, we suggest a streamlined two-role system:

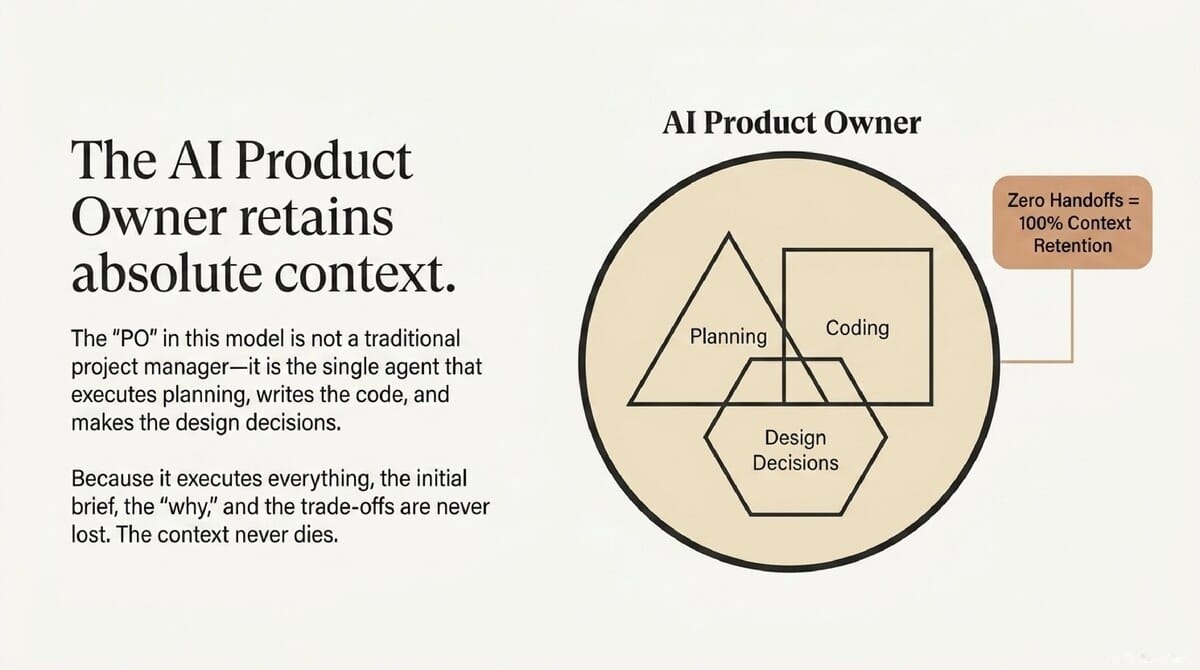

- The PO (Product Owner) Agent: This is the primary builder and context owner. It handles the initial brief, the planning, the design decisions, and the actual code generation. Because it is a single continuous stream of information, there are zero handoffs and zero context decay; the agent that writes the code is the same one that understood the original design trade-offs.

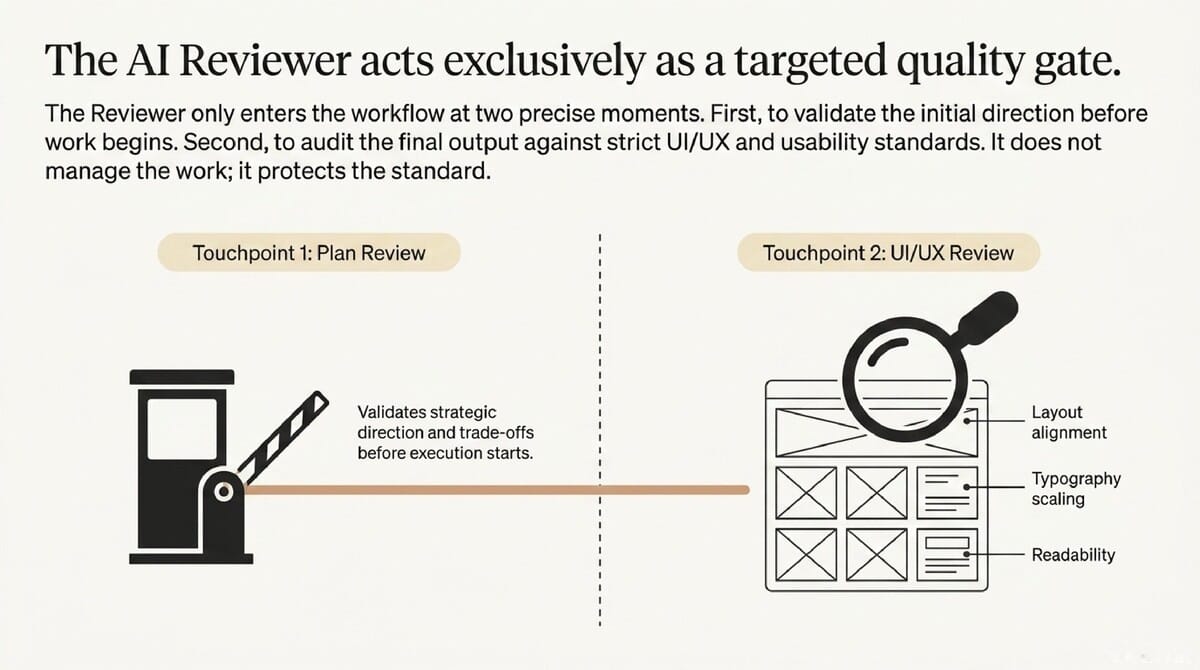

- The Reviewer Agent: This agent acts as a specialized quality gate. It does not perform the creative or technical work but audits the PO's output against specific, objective standards at critical checkpoints.

Leadership Impact: Defining the Reviewer’s Gates

The Reviewer Agent is essential because even the best PO Agent can suffer from tunnel vision. The Reviewer operates at two specific gates: the Plan Review (checking the strategy before work begins) and the UI/UX Review (checking the output for layout, typography, and readability). While the PO focuses on execution, the Reviewer verifies that the final product adheres to the design system's core principles.

By adopting this model, Design Ops leads can drastically reduce their management overhead. Rather than navigating a complex web of 23 interacting agents to find a point of failure, the leader manages a single output stream. This creates a more robust, high-velocity workflow that produces coherent products with minimal human "babysitting."

Takeaway #5: Complexity is a Failure Point, Not a Feature

Managing your AI agents shouldn't be a full-time job.

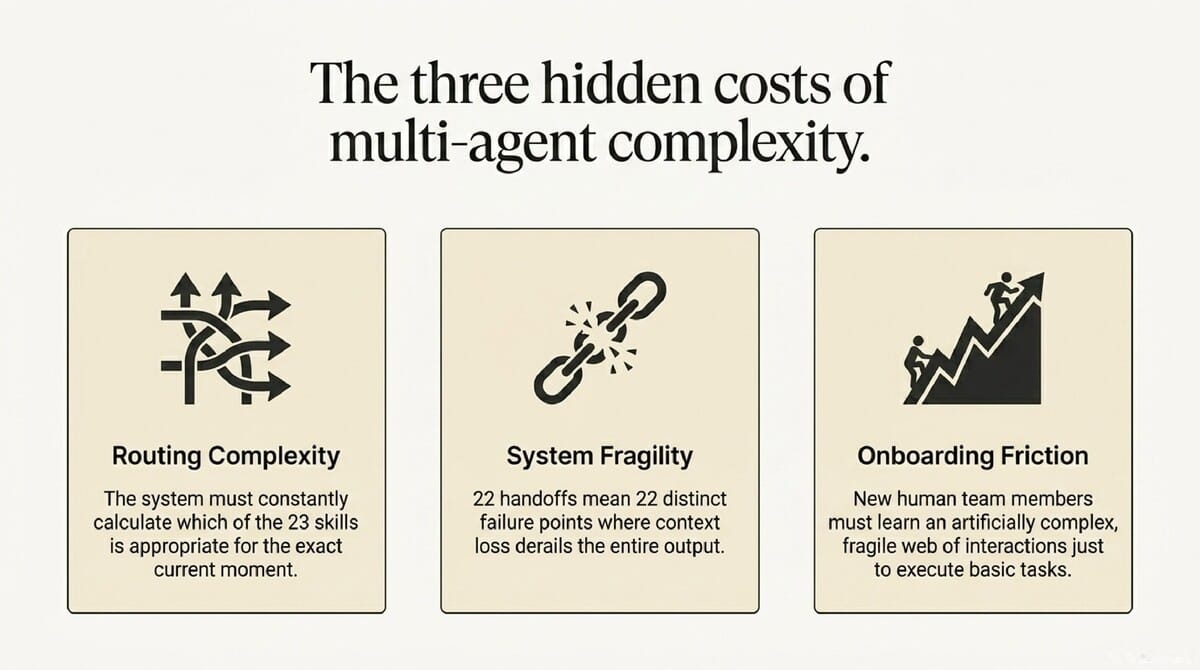

Design leaders and "Vibe Coders" often fall into the trap of believing that a more complex agentic architecture equates to a more powerful system. In reality, every role added to a system is another opportunity for a prompt to be misunderstood or for a handoff to fail. Complexity in AI workflows doesn't create robustness; it creates a "Learning Cost" that eats into your team's actual productivity.

If you have 23 agents, you now have to spend your day debugging the connections between those agents. You have to learn which specific agent to trigger for a typography fix versus a security audit, and you have to ensure the data is flowing correctly between them. This effectively turns a Design Lead into a "Virtual HR Manager" for a team of digital bots that shouldn't need a manager in the first place.

Strategic Advice: Simple is Robust

A simple system is easier to monitor, easier to fix, and far more likely to produce a coherent final product. In the PO + Reviewer model, if something goes wrong, the source of the error is immediately clear. You are either dealing with a context issue in the PO or a failure in the Reviewer's quality gate.

Leadership should prioritize "Operational Velocity" over "Architectural Complexity." The goal of AI implementation is to remove friction from the design-to-code pipeline, not to introduce new layers of digital bureaucracy. A robust, lean system allows your human team to focus on high-level strategy while the AI handles the heavy lifting of execution and auditing.

Conclusion: Moving to AI-Native Workflows

AI-native work requires a fundamental shift in our mental models. It means letting the AI be the generalist it naturally is, rather than trying to force it into the specialized, siloed roles we created for humans during the Industrial Revolution. Hierarchies are 2,000-year-old solutions to human information bottlenecks that AI has effectively rendered obsolete.

If AI has solved the problem of processing and synthesizing vast amounts of information simultaneously, we must stop recreating the very bureaucracy we were trying to escape. By moving to a PO + Reviewer model, we allow the technology to work "AI-style"—with full context and zero-loss execution.

As you look at your current agentic workflows, ask yourself a hard question. Are you building an AI team to solve your organizational problems, or are you just recreating a digital version of the corporate ladder? The leaders who embrace simplicity and context will be the ones who achieve true scale and innovation.

FAQ

Q: If AI can do everything, why do we need a Reviewer?

A: The Reviewer acts as an objective quality gate. While the PO is excellent at synthesis and execution, the Reviewer provides a "second pair of eyes" to ensure the output meets high-level standards for typography, accessibility, and security that might be overlooked during the fast-paced build phase.

Q: Isn't a 'CEO Agent' better at strategy than a 'Coder Agent'?

A: No. In most cases, they are the exact same underlying model (e.g., Claude or GPT). The quality of the strategy is determined by the "Context" and data the agent has access to, not the "CEO" title in the prompt.

Q: What is the biggest risk of the 23-role structure?

A: Context decay. Every time information is passed between 23 different agents, it is summarized and filtered. This "Telephone Game" leads to the loss of critical project "Whys" and results in shallow, poorly integrated final products.

Q: How does the PO + Reviewer model handle design feedback?

A: Feedback is handled through a "UI/UX Review" gate. After the PO completes the work, the Reviewer audits the final layout, typography, and readability against the original brief. If standards aren't met, the Reviewer provides a single, consolidated feedback loop to the PO for iteration.

Metadata

- Last updated: May 22, 2024

- Status: Final for Publication

📄 Briefing Doc: Technical Analysis

📋 Technical Specifications & Detailed Analysis

Rethinking AI Agent Architecture: Moving Beyond Human Hierarchies to Context-Driven Models

Executive Summary

Current approaches to AI agent orchestration often mirror human organizational structures, assigning specific roles such as "CEO," "Designer," or "Lead Engineer" to different agent instances. This briefing document, based on an analysis of AI implementation strategies, argues that this mimicry is fundamentally flawed. Human hierarchies were developed to overcome cognitive limitations and information bottlenecks—barriers that do not apply to Large Language Models (LLMs).

The traditional multi-role approach (exemplified by frameworks like G-Stack) introduces significant "handoff" risks, where critical context is lost between agents, leading to distorted outputs and increased systemic complexity. This document proposes a shift toward a streamlined "PO + Reviewer" model. This model prioritizes context retention and reduces failure points by utilizing a single Product Owner (PO) agent to handle the entire workflow, supported by targeted review gates to ensure quality and alignment.

Key Themes and Detailed Analysis

1. The Fallacy of Human Organizational Mimicry

The practice of assigning diverse titles to AI agents—such as CEO, CTO, or QA Lead—is a 2,000-year-old solution to a human problem. Humans divide labor because an individual cannot master every domain (marketing, coding, law, finance) or process vast amounts of information simultaneously.

- Human Constraints: Organizational hierarchies exist because human cognitive capacity is limited. We specialize to dive deep and then coordinate those specializations.

- AI Versatility: AI does not share these physical or cognitive limitations. The same model (e.g., Claude) can generate a marketing strategy, write React code, and perform a security audit with equal proficiency. Dividing these tasks among different "titled" agents is an artificial constraint that ignores the core nature of AI.

2. Context Erosion via "Handoffs"

The most significant drawback of a multi-agent hierarchy is the loss of context during information transfers. In systems with dozens of roles, information is passed from one agent to the next in the form of summaries.

- Information Distortion: Much like the "telephone game" in human organizations, as instructions move through layers (e.g., from a CEO agent to a Developer agent), the nuances of why certain decisions were made or what trade-offs were considered are lost.

- Loss of Insight: When an agent works only from a summary provided by a predecessor, its judgment becomes shaky because it lacks the original context of the conversation or the project's evolution.

3. The Illusion of Titles vs. The Reality of Context

Assigning a title like "Staff Engineer" to an AI agent does not inherently grant it superior coding abilities compared to an agent titled "QA Lead" if they are using the same underlying model.

- The Prompting Reality: These roles are often just the same LLM running on different prompts (e.g., "Think like a CEO" vs. "Think like a Manager").

- Context as Ability: The document asserts that titles do not create ability; context creates ability. An agent with the full history and context of a project will perform better than a "specialized" agent that has only been given a narrow, summarized slice of information.

4. Systemic Fragility and Complexity

High-complexity frameworks (such as those utilizing 20+ roles) create three primary types of friction:

- Operational Complexity: Users must decide which of the 23 skills to invoke at any given moment, making the management of the AI a task in itself.

- Failure Points: Each handoff between agents is a potential point of failure. A system with 23 roles arranged in a single chain involves 22 handoffs, creating 22 opportunities for context loss or error; any branching or back-and-forth between agents adds more.

- Learning Costs: New team members must learn the intricacies of a complex multi-agent system, including when and how to trigger specific roles, which decreases overall efficiency.
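The handoff arithmetic above can be checked with a back-of-the-envelope sketch. Two assumptions are not from the source and are purely illustrative: the roles form a strictly linear chain, and every handoff independently preserves the same fraction of context (`ASSUMED_RETENTION`, set here to an arbitrary 0.95). Real pipelines will not behave this uniformly; the sketch only shows how small per-handoff losses compound.

```python
def handoffs_in_chain(roles: int) -> int:
    """A strictly linear chain of N roles passes work along N - 1 times."""
    if roles < 1:
        raise ValueError("roles must be at least 1")
    return roles - 1


def context_retained(handoffs: int, per_handoff_retention: float) -> float:
    """Fraction of context left if every handoff independently keeps the same share."""
    if not 0.0 <= per_handoff_retention <= 1.0:
        raise ValueError("per_handoff_retention must be between 0 and 1")
    return per_handoff_retention ** handoffs


ASSUMED_RETENTION = 0.95  # illustrative guess, not a measured value

for roles in (1, 2, 23):
    n = handoffs_in_chain(roles)
    kept = context_retained(n, ASSUMED_RETENTION)
    print(f"{roles} roles -> {n} handoffs -> {kept:.0%} retained")
```

Under these assumptions, a 23-role chain ends with roughly a third of the original context, even though each individual handoff looks nearly lossless.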

The Proposed Framework: PO + Reviewer

To maximize AI efficiency, the document suggests a simplified structure consisting of only two primary components:

| Component | Role & Responsibility | Timing |
|---|---|---|
| Product Owner (PO) | Owns the entire product lifecycle. Handles planning, coding, and design decisions. | Start to Finish |
| Reviewer | Acts as a quality gate. Reviews the plan for direction and the final output for standards. | Strategic Checkpoints |

The Workflow:

- User Input: The user provides a brief.

- Plan Review: The Reviewer examines the PO's proposed direction to ensure it is correct before work begins.

- Execution: The PO executes the entire project (design, code, logic) without handoffs, maintaining 100% of the context.

- Final Review: The Reviewer conducts a UI/UX and quality check (layout, typography, readability) on the finished product.
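The workflow above can be sketched as a single function with two gates. This is a hedged illustration, not the author's implementation: `ProductOwner`, `Gate`, `run`, and both review functions are hypothetical, and the string checks stand in for real model calls. The structural point is that one object holds the full history from brief to output, while the Reviewer is invoked only at the two checkpoints.

```python
from dataclasses import dataclass, field
from typing import Callable

# A gate returns a list of issues; an empty list means the work passes.
Gate = Callable[[str], list[str]]


@dataclass
class ProductOwner:
    """Single agent that keeps the whole history; nothing is summarized away."""
    history: list[str] = field(default_factory=list)

    def plan(self, brief: str) -> str:
        self.history.append(f"brief: {brief}")
        plan = f"plan for: {brief}"
        self.history.append(plan)
        return plan

    def execute(self, plan: str) -> str:
        output = f"design + code implementing ({plan})"
        self.history.append(output)
        return output


def run(brief: str, po: ProductOwner, plan_review: Gate, final_review: Gate) -> str:
    plan = po.plan(brief)
    issues = plan_review(plan)          # gate 1: before any work begins
    if issues:
        raise ValueError(f"plan rejected: {issues}")
    output = po.execute(plan)           # no handoff: same agent, same history
    issues = final_review(output)       # gate 2: UI/UX and quality check
    if issues:
        raise ValueError(f"output rejected: {issues}")
    return output


def plan_review(plan: str) -> list[str]:
    return [] if plan.startswith("plan for:") else ["plan does not address the brief"]


def final_review(output: str) -> list[str]:
    return [] if "design + code" in output else ["output is missing design or code"]


po = ProductOwner()
result = run("settings page", po, plan_review, final_review)
print(result)
print(len(po.history), "history entries kept by the PO")
```

For brevity, a failed gate raises an error here; a fuller version would pass the issues back to the PO for another iteration, as the FAQ describes.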

Important Quotes with Context

"Titles do not create ability. Context creates ability."

- Context: This highlights the critique of frameworks like G-Stack. The author argues that giving an agent a "CEO" title is meaningless if the agent is denied the full context of the project. The model's power comes from information, not the label in its prompt.

"The human reason for dividing roles is cognitive limitation... AI is different."

- Context: This serves as the philosophical foundation for the document. It challenges the assumption that AI should work the same way humans do. Because AI can process multiple domains of knowledge simultaneously, the traditional "departmental" approach is unnecessary.

"A simple system is much stronger. Complexity creates failure points."

- Context: In the comparison between the 23-role G-Stack and the 2-role PO model, the author emphasizes that every additional agent increases the likelihood of a system breakdown. Simplicity is equated with robustness.

"It’s not about making AI work like a human; it’s about making AI work like AI."

- Context: This concluding thought urges developers and users to stop applying 2,000-year-old human organizational logic to a brand-new technology that has already solved the information problems those hierarchies were meant to fix.

Actionable Insights

- Consolidate Roles: Evaluate current AI agent workflows and identify where "specialized" roles can be merged. If multiple agents are using the same underlying model, consider combining them into a single agent to preserve context.

- Prioritize Context over Summarization: Avoid workflows that rely on passing summaries between agents. Aim for a "single source of truth" where one agent retains the entire conversation and decision-making history.

- Implement Quality Gates, Not Layers: Instead of having AI agents manage each other through a hierarchy, use a "Reviewer" agent at critical start and end points to act as a quality gate (Plan Review and Output Review).

- Reduce Handoffs: Audit your AI systems for "handoff" points. Each time information moves from one agent to another, look for ways to eliminate that transition to reduce the risk of information distortion.

- Focus on the PO Model: For project-based AI tasks, move toward a Product Owner model where the AI is responsible for the "full stack" of the task, ensuring that the original intent of the user brief is maintained through to the final line of code or design.